To use NIC teaming, two or more network adapters must be uplinked to the same virtual switch. The main advantages of NIC teaming are:

- Increased network capacity for the virtual switch hosting the team.

- Passive failover in the event one of the adapters in the team goes down.

The available load balancing policies are:

- Route based on the originating port ID: Choose an uplink based on the virtual port where the traffic entered the virtual switch.

- Route based on an IP hash: Choose an uplink based on a hash of the source and destination IP addresses of each packet. For non-IP packets, the hash is computed from whatever data sits at those offsets.

- Route based on a source MAC hash: Choose an uplink based on a hash of the source Ethernet MAC address.

- Use explicit failover order: Always use the highest-order uplink from the list of Active adapters that passes the failover detection criteria.

- Route based on physical NIC load (Only available on Distributed Switch): Choose an uplink based on the current loads of physical NICs.

Notes:

- The default load balancing policy is Route based on the originating virtual port ID. If the physical switch is using link aggregation, the Route based on IP hash policy must be used.

- LACP support was introduced in vSphere 5.1 for distributed switches and requires additional configuration.

- Ensure that the VLAN and the link aggregation protocol (if any) are configured correctly on the physical switch ports.
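
To make the differences between these policies concrete, here is a minimal Python sketch of how each one could map traffic to an uplink. It is an illustration only: the function names are hypothetical, and the simple XOR and modulo arithmetic is an assumption standing in for whatever hash ESXi actually computes. The explicit failover function treats "link up" as the only failover detection criterion.

```python
import ipaddress
from typing import Dict, Optional, Sequence


def by_port_id(port_id: int, uplinks: Sequence[str]) -> str:
    """Originating port ID: every frame from one virtual port uses one uplink."""
    return uplinks[port_id % len(uplinks)]


def by_ip_hash(src_ip: str, dst_ip: str, uplinks: Sequence[str]) -> str:
    """IP hash: the source/destination address pair selects the uplink.

    XOR followed by modulo is a simplification chosen for this sketch.
    """
    src = int(ipaddress.ip_address(src_ip))
    dst = int(ipaddress.ip_address(dst_ip))
    return uplinks[(src ^ dst) % len(uplinks)]


def by_mac_hash(src_mac: str, uplinks: Sequence[str]) -> str:
    """Source MAC hash: the sender's MAC address selects the uplink."""
    mac = int(src_mac.replace(":", ""), 16)
    return uplinks[mac % len(uplinks)]


def by_explicit_order(active_in_order: Sequence[str],
                      link_up: Dict[str, bool]) -> Optional[str]:
    """Explicit failover order: first active adapter that is still healthy."""
    for uplink in active_in_order:
        if link_up.get(uplink, False):
            return uplink
    return None  # no active adapter passed the check
```

In this sketch, with two uplinks, the flows from 10.0.0.1 to 10.0.0.2 and from 10.0.0.1 to 10.0.0.3 land on different uplinks under the IP hash policy, whereas all traffic from a given virtual port stays on a single uplink under the port ID policy. That is why only the IP hash policy can spread one virtual machine's traffic across an aggregated link.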

To configure NIC teaming for a standard vSwitch using the vSphere / VMware Infrastructure Client:

- Highlight the host and click the Configuration tab.

- Click the Networking link.

- Click Properties.

- Under the Network Adapters tab, click Add.

- Select the appropriate (unclaimed) network adapter(s) and click Next.

- Ensure that the selected adapter(s) are under Active Adapters.

- Click Next > Finish.

- Under the Ports tab, highlight the name of the port group and click Edit.

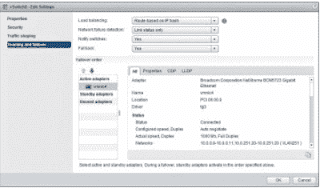

- Click the NIC Teaming tab.

- Select the correct Teaming policy under the Load Balancing field.

- Click OK.
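
The same result can usually be reached from the ESXi command line instead of the client. The Python sketch below only builds the `esxcli` argument lists for the steps above (add the uplinks, then set the port group's teaming policy); it does not run them. The helper name `teaming_commands` and the example names `vSwitch0`, `VM Network`, and `vmnic0`/`vmnic1` are hypothetical, and the `esxcli` option names and policy values should be checked against `esxcli network vswitch standard --help` on the host, since they are assumed here rather than taken from this article.

```python
from typing import List, Sequence

# Policy names from this article mapped to the values esxcli is assumed to
# accept for --load-balancing (verify on your ESXi version).
ESXCLI_POLICY = {
    "Route based on the originating port ID": "portid",
    "Route based on an IP hash": "iphash",
    "Route based on a source MAC hash": "mac",
    "Use explicit failover order": "explicit",
}


def teaming_commands(vswitch: str, portgroup: str,
                     uplinks: Sequence[str], policy: str) -> List[List[str]]:
    """Return esxcli argument lists that team `uplinks` on a standard vSwitch."""
    if len(uplinks) < 2:
        raise ValueError("NIC teaming needs two or more uplinks")
    load_balancing = ESXCLI_POLICY[policy]

    # One "uplink add" per adapter, mirroring the Network Adapters > Add step.
    commands = [
        ["esxcli", "network", "vswitch", "standard", "uplink", "add",
         "--uplink-name=" + nic, "--vswitch-name=" + vswitch]
        for nic in uplinks
    ]
    # Port group teaming policy, mirroring the NIC Teaming tab.
    commands.append(
        ["esxcli", "network", "vswitch", "standard", "portgroup",
         "policy", "failover", "set",
         "--portgroup-name=" + portgroup,
         "--active-uplinks=" + ",".join(uplinks),
         "--load-balancing=" + load_balancing]
    )
    return commands
```

Each returned list could be passed to `subprocess.run` in an ESXi shell session, or joined with spaces and pasted by hand. Keeping command construction separate from execution makes it easy to review the commands before touching a production host.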

To configure NIC teaming for a standard vSwitch using the vSphere Web Client:

- Under vCenter Home, click Hosts and Clusters.

- Click on the host.

- Click Manage > Networking > Virtual Switches.

- Click on the vSwitch.

- Click Manage the physical network adapters.

- Select the appropriate (unclaimed) network adapter(s) and use the arrow to move the adapter(s) to Active Adapters.

- Click Edit settings.

- Select the correct Teaming policy under the Load Balancing field.

- Click OK.

To configure NIC teaming for a distributed switch using the vSphere Client:

- From Inventory, go to Networking.

- Click on the Distributed switch.

- Click the Configuration tab.

- Click Manage Hosts. A window pops up.

- Click the host.

- From the Select Physical Adapters option, select the correct vmnics.

- Click Next for the rest of the options.

- Click Finish.

- Expand the Distributed switch.

- Right-click the Distributed Port Group.

- Click Edit Settings.

- Click Teaming and Failover.

- Select the correct Teaming policy under the Load Balancing field.

- Click OK.

To configure NIC teaming for a distributed switch using the vSphere Web Client:

- Under vCenter Home, click Networking.

- Click on the Distributed switch.

- Click the Getting Started tab.

- Under Basic tasks, click Add and manage hosts. A window pops up.

- Click Manage host networking.

- Click Next > Attached hosts.

- Select the host(s).

- Click Next.

- Select Manage physical adapters and deselect the rest.

- Click Next.

- Select the correct vmnics.

- Click Assign Uplink > Next.

- Click Next for the rest of the options.

- Click Finish.

- Expand the Distributed Switch.

- Click the Distributed Port Group, then click Manage > Settings.

- Under Properties, click Edit.

- Click Teaming and failover.

- Select the correct Teaming policy under the Load Balancing field.

- Click OK.